Streamlining the EHR: Interview with Mihai Fonoage at Modernizing Medicine

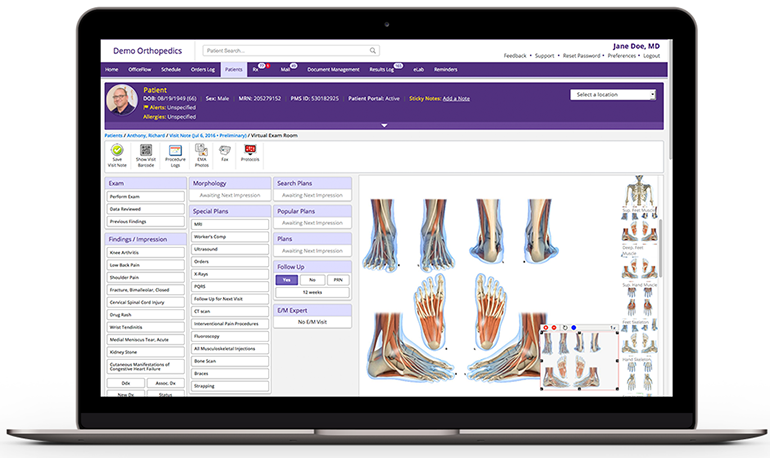

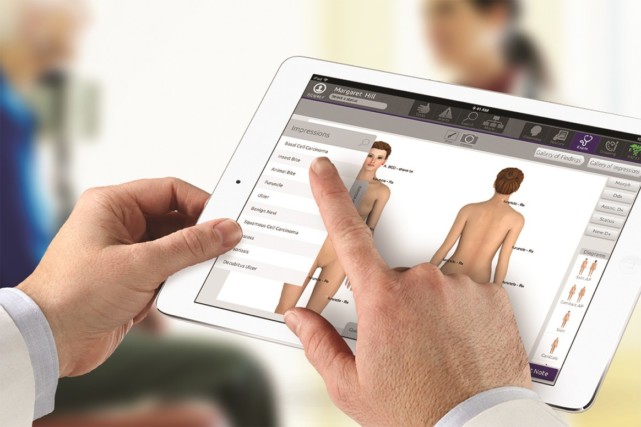

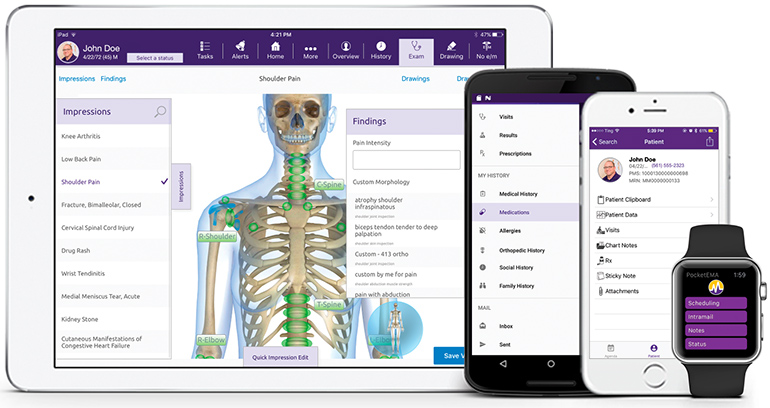

Modernizing Medicine, a medtech company based in Florida, has developed the Electronic Medical Assistant (EMA), an EHR system that the company claims can significantly streamline clinician workflow. Cloud-based and developed by physicians, EMA aims to adapt intuitively to each doctor’s style of practice and to remember their preferences. One of its features is intelligence amplification (IA).

Conn Hastings, Medgadget, June 19, 2018

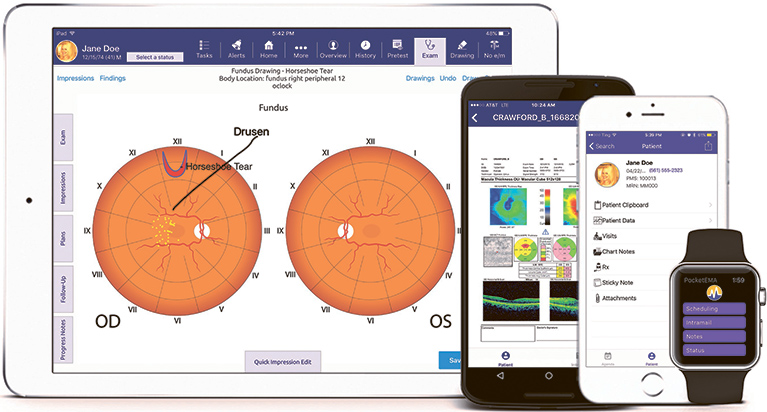

The system also features a voice assistant that lets clinicians dictate patient notes as text, navigate the EHR, and make corrections by voice. The speech recognition system is reported to be three to five times faster than typing.

Modernizing Medicine claims that the system reduces the clicks, swipes, keystrokes, and manual documentation associated with other EHR systems, which should allow doctors to spend more time face-to-face with their patients. The system also has a built-in medical dictionary that recognizes specialized terms and phrases and applies correct clinical formatting.

The company’s leadership hopes eventually to develop the system to the point where it can almost completely replace touch-based input to the EHR: the AI system would listen to a physician-patient conversation, take patient notes, and create reports independently and in real time.

| Conn Hastings, Medgadget: Please give us some background on the current AI landscape in medical technology. |

Mihai Fonoage, Vice President of Engineering, Modernizing Medicine: Computers can’t replace the human interaction physicians have with their patients, and they can’t replace a physician’s ability to diagnose. So, while artificial intelligence (AI) may not have a place in our exam rooms, intelligence amplification (IA) certainly does. You use IA almost every day without knowing it. For example, when you start filling out a form online, you type a few characters and the form auto-populates the rest (if you choose to accept your computer’s suggestion). That’s IA.

In today’s medical landscape, all physicians need to use EHRs. Unfortunately, not all EHRs are created equal, especially legacy systems that don’t implement any form of modern technology like IA. An EHR can leverage IA through adaptive learning. For example, the software automatically remembers an individual physician’s preferences and presents them at the top of the list: the medications they most commonly prescribe, their preferred plan for a diagnosis, the sutures they use most often, and so on. This saves time and frustration by sparing physicians from having to search or scroll for the items they select most often.
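The adaptive-learning behavior described here (remembering what a physician picks and surfacing it first, combined with the type-a-few-characters auto-complete from the form example) can be sketched in a few lines. This is a minimal illustration under our own assumptions, not Modernizing Medicine’s implementation: the class `PreferenceRanker`, its `record` and `suggest` methods, and the plain frequency-count ranking are all hypothetical.

```python
from __future__ import annotations

from collections import Counter, defaultdict


class PreferenceRanker:
    """Hypothetical sketch: rank a physician's options by how often they pick them."""

    def __init__(self) -> None:
        # physician_id -> category (e.g. "medications") -> Counter of selected items
        self._counts: dict[str, dict[str, Counter]] = defaultdict(
            lambda: defaultdict(Counter)
        )

    def record(self, physician_id: str, category: str, item: str) -> None:
        """Remember one selection, such as a prescribed medication."""
        self._counts[physician_id][category][item] += 1

    def suggest(
        self, physician_id: str, category: str, options: list[str], prefix: str = ""
    ) -> list[str]:
        """Return options starting with `prefix`, most-used first.

        sorted() is stable, so ties (including never-used items) keep
        the order in which `options` was supplied.
        """
        counts = self._counts[physician_id][category]
        matching = [o for o in options if o.lower().startswith(prefix.lower())]
        return sorted(matching, key=lambda o: -counts[o])


if __name__ == "__main__":
    ranker = PreferenceRanker()
    for drug in ["doxycycline", "tretinoin", "doxycycline"]:
        ranker.record("dr_a", "medications", drug)

    catalogue = ["adapalene", "doxycycline", "minocycline", "tretinoin"]
    print(ranker.suggest("dr_a", "medications", catalogue))
    # ['doxycycline', 'tretinoin', 'adapalene', 'minocycline']
    print(ranker.suggest("dr_a", "medications", catalogue, prefix="m"))
    # ['minocycline']
```

Ranking by raw counts is the simplest choice; a real system might also weight recent selections more heavily or learn per-diagnosis preferences, which this sketch does not attempt.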

| Is the EHR a significant drain on clinician time at present? |

Many feel that EHRs drain clinician time and contribute to the rising rate of physician burnout. While this may be true in some circumstances, it can’t be generalized across all EHRs. Many have poorly designed user interfaces and force physicians to adopt unintuitive technology.

Others, by contrast, follow a user-centered design approach that puts doctors at the center of the design and development process, and they incorporate technology like IA, adaptive learning, and voice assist. EHRs built by practicing physicians for others in their specialty help relieve the burden of time-consuming manual reporting, enabling doctors to spend more time face-to-face with their patients and less time documenting the visit.

Additionally, with today’s shift to value-based care, it’s imperative for physicians to have an easy-to-use system that helps them meet CMS reimbursement requirements. EMA uses IA to automatically collect certain quality measures for value-based reporting without the physician having to constantly check boxes.

| Please explain the features of the voice recognition and control system available with the Modernizing Medicine EMA EHR. |

Doctors have always loved dictating, and today’s voice recognition technology, which enters data directly into the EHR, can make documentation extremely simple for the physician. With voice recognition, the physician can talk into the EHR, eliminating the clicks, touches, and burden of manual documentation.

Looking into the future, the next iteration of voice recognition should be able to collect structured data, making sense of the conversation during a patient visit and drawing on medical knowledge to document the appointment automatically (and correctly). No physician controls would be needed; the system would seamlessly document the physician-patient conversation in the background.

| How does the system ensure accuracy and security in recording and transmitting clinical information? |

EMA is delivered as Software-as-a-Service (SaaS) and hosted by Amazon Web Services, providing redundant, cloud-based electronic medical record storage. Because data is stored in the cloud, patient information is not saved on iPads, laptops, or desktops. All information is sent securely to the cloud, fully encrypted.

Also, since upgrades and enhancements are applied in the cloud for our clients, they don’t need to hire staff, who may or may not have the necessary education and experience, to install them manually.

| How has the system been received so far? Have clinicians found the speech recognition system useful? |

While EMA has included voice recognition for years, many of our users find that the intuitive, iPad-based technology is more efficient than voice recognition.

Since practicing physicians built the system specifically with their specialty’s workflow in mind, charting an office visit tends to be fairly easy. That said, some physicians still love dictating and find voice recognition extremely helpful and comforting.

| What are your plans for future developments of this system? |

Modernizing Medicine has built IA into its EHR since the company’s inception in 2010, and recently our team has been working on ways to take it even further. We’re developing functionality that enables EMA to recognize not only the drugs physicians frequently prescribe but also whether each one requires prior authorization, dramatically reducing paperwork and prescription wait times.

Thanks to the structured data we possess, two other high-impact areas we are considering for future development are medication adherence and predicting no-shows. These systems are being developed to work without the physician having to touch the screen. Our main challenge in the short term is to keep innovating for our customers with technology like IA while continuously updating our core solutions so physicians can increase practice efficiency and improve patient outcomes.

| Conn Hastings received a PhD from the Royal College of Surgeons in Ireland for his work in drug delivery, investigating the potential of injectable hydrogels to deliver cells, drugs and nanoparticles in the treatment of cancer and cardiovascular diseases. After achieving his PhD and completing a year of postdoctoral research, Conn pursued a career in academic publishing, before becoming a full-time science writer and editor, combining his experience within the biomedical sciences with his passion for written communication. |

Source Medgadget
